#91 Still more final exam questions

In my previous post (here), I discussed two open-ended questions that I asked on a recent final exam. Now I will discuss eight auto-graded, multiple-choice questions that I asked on that final exam. As with last week’s questions, my goal here is to assess students’ big-picture understanding of fundamental ideas rather than their ability to apply specific procedures. As always, you can judge how well you think these questions achieve that goal. Also as always, questions that I pose to students appear in italics.

1. Suppose that Cal Poly’s Alumni Office wants to collect sample data to investigate whether Cal Poly graduates from the College of Business differ from Cal Poly graduates from the College of Engineering with regard to average annual salary.

a) What are the observational units? [Options: Cal Poly graduates; Annual salaries; Colleges]

b) What is the response variable? [Options: Annual salary; Which college the person graduated from; Whether or not the average annual salary differs between graduates of the two colleges]

c) Should you advise the alumni office to use random sampling to collect the data? [Options: Yes, no]

d) Should you advise the alumni office to use random assignment to collect the data? [Options: No, yes]

e) What is the alternative hypothesis to be tested? [Options: That the population mean salaries are different between the two colleges; That the population mean salaries are the same between the two colleges; That the sample mean salaries are different between the two colleges; That the sample mean salaries are the same between the two colleges]

This question covers a lot of basics: observational units and variables, random sampling and random assignment, parameters and statistics. I think this provides a good example of emphasizing the big picture rather than specific details. The toughest question is part d), because many students instinctively believe that random assignment is a good thing that should be used as much as possible. But it’s not feasible to randomly assign students to major in a particular college, and it’s certainly not possible to randomly assign college graduates to have majored in a particular college in retrospect.

2. Suppose that a student collects sample data on how long (in seconds) customers wait to be served at two fast-food restaurants. Based on the sample data, the student calculates a 95% confidence interval for the difference in population mean wait times to be (-20.4, -6.2). What can you conclude about the corresponding p-value for testing whether the two restaurants have different population mean wait times? [Options: Smaller than 0.05; Smaller than 0.01; Larger than 0.05; Larger than 0.10; Impossible to say from this confidence interval]

I could have asked students to calculate a confidence interval for a difference in population means. But this question tries to assess a big-picture idea: how a confidence interval relates to a hypothesis test. Because the confidence interval does not include zero, the sample data provide substantial evidence that the population mean wait times differ between the two restaurants. How much evidence? Well, this is a 95% confidence interval, so the difference must be significant at the analogous 5% significance level. This means that the (two-sided) p-value must be less than 0.05.

I’m not usually a fan of including options such as “impossible to say.” But that’s going to be the correct answer for the next question, so I realized that I should occasionally include this as an incorrect option.
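To make the connection between the confidence interval and the test concrete, here is a minimal Python sketch. For simplicity it assumes a z-based interval of the form estimate ± z*·SE. The exam’s interval may well have come from a t-procedure, but the same correspondence holds for a t-interval and t-test that use the same standard error and degrees of freedom. The function names are illustrative only. The final check confirms that, across a range of estimates, a 95% interval excludes zero exactly when the two-sided p-value is below 0.05.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()

def z_interval(estimate, se, level=0.95):
    """Confidence interval of the form estimate +/- z* x SE."""
    z_star = STD_NORMAL.inv_cdf(1 - (1 - level) / 2)
    return estimate - z_star * se, estimate + z_star * se

def two_sided_p_value(estimate, se, null_value=0.0):
    """Two-sided p-value for H0: parameter = null_value, using the same z statistic."""
    z = (estimate - null_value) / se
    return 2 * (1 - STD_NORMAL.cdf(abs(z)))

def duality_holds(estimate, se, level=0.95):
    """True when 'interval excludes 0' agrees with 'p-value < 1 - level'."""
    lo, hi = z_interval(estimate, se, level)
    excludes_zero = hi < 0 or lo > 0
    significant = two_sided_p_value(estimate, se) < 1 - level
    return excludes_zero == significant

# Check the correspondence for a grid of hypothetical estimates with SE = 5.
print(all(duality_holds(est, 5.0) for est in range(-30, 31)))
```

The sketch only shows the guaranteed link between the interval and the 5% test; it is not meant to recover the exact p-value behind the interval in the question.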

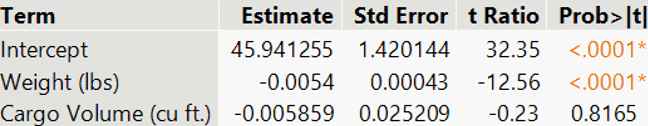

3. The following output comes from a multiple regression model for predicting a car’s overall MPG (miles per gallon) rating from its weight and cargo volume:

If you were to use the same data to fit a regression model for predicting a car’s MPG rating based on only its cargo volume, what (if anything) can you say about whether cargo volume would be a statistically significant predictor? [Options: Impossible to say from this output; Yes; No]

This question tries to assess a big-picture idea with multiple regression. The result of a t-test for an individual predictor variable pertains only to the set of predictor variables used in that model. This output reveals that cargo volume is not a helpful predictor of MPG rating when used in conjunction with weight. But cargo volume may or may not be a useful predictor of MPG rating on its own.
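The following Python sketch illustrates one way this can happen, using a small constructed data set (hypothetical numbers, not the car data behind the output above) in which cargo volume is strongly related to weight. It computes the t-statistic for cargo volume on its own, and then after adjusting for weight. The adjusted version relies on the Frisch-Waugh-Lovell result: the coefficient of one predictor in a multiple regression equals the slope from regressing the weight-adjusted response on the weight-adjusted predictor. The function names are illustrative.

```python
from statistics import fmean

def fit_line(y, x):
    """Least-squares line of y on x (with intercept): slope, residuals, and Sxx."""
    xbar, ybar = fmean(x), fmean(y)
    sxx = sum((xi - xbar) ** 2 for xi in x)
    slope = sum((xi - xbar) * (yi - ybar) for xi, yi in zip(x, y)) / sxx
    resid = [yi - ybar - slope * (xi - xbar) for xi, yi in zip(x, y)]
    return slope, resid, sxx

def t_alone(y, x):
    """t-statistic for the slope of x in the simple model y ~ x."""
    slope, resid, sxx = fit_line(y, x)
    s2 = sum(r * r for r in resid) / (len(y) - 2)   # residual variance, n - 2 df
    return slope / (s2 / sxx) ** 0.5

def t_adjusted(y, x, control):
    """t-statistic for x in the model y ~ control + x (Frisch-Waugh-Lovell)."""
    _, ry, _ = fit_line(y, control)   # response with the control's effect removed
    _, rx, _ = fit_line(x, control)   # predictor with the control's effect removed
    sxx = sum(r * r for r in rx)
    coef = sum(a * b for a, b in zip(rx, ry)) / sxx
    sse = sum((b - coef * a) ** 2 for a, b in zip(rx, ry))   # full-model residuals
    s2 = sse / (len(y) - 3)   # n - 3 df: intercept plus two slopes
    return coef / (s2 / sxx) ** 0.5

# Hypothetical toy data: heavier cars have more cargo space and lower MPG.
weight = [35, 35, 35, 35, 25, 25, 25, 25]   # hundreds of pounds
cargo = [19, 19, 17, 17, 13, 13, 11, 11]    # cubic feet
mpg = [29, 28, 28, 27, 32, 31, 33, 32]

print(f"cargo alone:        t = {t_alone(mpg, cargo):.2f}")
print(f"cargo after weight: t = {t_adjusted(mpg, cargo, weight):.2f}")
```

In this made-up data set, cargo volume is a strong predictor on its own (t ≈ -4.9 with 6 degrees of freedom, well past the two-sided 5% cutoff of about 2.45) but contributes nothing once weight is in the model (t = 0). Other data could just as well make cargo volume insignificant on its own, which is why the regression output alone cannot settle the question.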

4. Suppose that you select a random sample of people and ask for their political viewpoint and whether or not they support a particular policy proposal. Suppose that 60% of liberals support the proposal, compared to 35% of moderates and 25% of conservatives. For which sample size will this result provide stronger evidence that the three political groups do not have the same population proportions who support the proposal? [Options: Sample size of 200 for each group; Sample size of 20 for each group; The strength of evidence will be the same for both of these sample sizes.]

Students may have recognized this as a situation calling for a chi-square test, because we’re comparing proportions across three groups. But this question is assessing a more fundamental idea about the impact of sample size on strength of evidence. Students needed only to realize that, all else being the same, larger sample sizes produce stronger evidence of a difference among the groups.
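A short Python sketch makes the sample-size effect visible. It assumes that the sample proportions come out at exactly 60%, 35%, and 25% in every group, and it computes the Pearson chi-square statistic for the resulting 2 × 3 table (support or not, by political group). With 2 degrees of freedom, the chi-square upper-tail probability has the closed form exp(-x/2), so no statistics library is needed. The helper names are illustrative.

```python
from math import exp

def chi_square_stat(yes_counts, group_sizes):
    """Pearson chi-square statistic for a 2 x k table of support / no support by group."""
    p_pooled = sum(yes_counts) / sum(group_sizes)
    stat = 0.0
    for yes, n in zip(yes_counts, group_sizes):
        no = n - yes
        expected_yes = n * p_pooled
        expected_no = n * (1 - p_pooled)
        stat += (yes - expected_yes) ** 2 / expected_yes
        stat += (no - expected_no) ** 2 / expected_no
    return stat

def p_value_df2(stat):
    """Upper-tail chi-square probability for df = 2, which equals exp(-x / 2)."""
    return exp(-stat / 2)

proportions = [0.60, 0.35, 0.25]   # liberal, moderate, conservative
for n in (20, 200):
    yes = [round(p * n) for p in proportions]
    stat = chi_square_stat(yes, [n] * 3)
    print(f"n = {n} per group: chi-square = {stat:.2f}, p-value = {p_value_df2(stat):.2g}")
```

Under these assumptions the statistic grows tenfold when each group grows tenfold, so the p-value drops from about 0.07 (not significant at the 0.05 level) to far below 0.001.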

5. Suppose that you select a random sample of 50 Cal Poly students majoring in Business, 50 majoring in Engineering, and 50 majoring in Liberal Arts. You ask them to report how many hours they study in a typical week. You calculate the average responses to be 25.6 hours in Business, 32.2 hours in Engineering, and 21.8 hours in Liberal Arts. For which standard deviation will this result provide stronger evidence that the three majors do not have the same population mean study time? [Options: Standard deviation of 4.0 hours in each group; Standard deviation of 8.0 hours in each group; The strength of evidence will be the same for both of these standard deviations.]

This question is very much like the previous one. Now the response variable (self-reported study time) is numerical rather than categorical, so we are comparing means rather than proportions, and ANOVA is the relevant procedure. This question asks about the role of within-group variability, without using that term. Students should recognize that, all else being equal, less within-group variability provides stronger evidence of a difference among the groups.
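Because only summary statistics are given, the ANOVA F-statistic can be computed directly from the group means, standard deviations, and sample sizes. The minimal Python sketch below (the function name is illustrative) shows that halving the common within-group standard deviation multiplies F by four, because the within-group mean square equals the square of that common standard deviation.

```python
def anova_f(means, sds, sizes):
    """One-way ANOVA F statistic from group summary statistics."""
    k = len(means)
    n_total = sum(sizes)
    grand_mean = sum(n * m for n, m in zip(sizes, means)) / n_total
    # Between-group mean square: variability among the group means
    msg = sum(n * (m - grand_mean) ** 2 for n, m in zip(sizes, means)) / (k - 1)
    # Within-group mean square: pooled variance within the groups
    mse = sum((n - 1) * s ** 2 for n, s in zip(sizes, sds)) / (n_total - k)
    return msg / mse

means = [25.6, 32.2, 21.8]   # Business, Engineering, Liberal Arts (hours per week)
sizes = [50, 50, 50]
for sd in (4.0, 8.0):
    print(f"SD = {sd}: F = {anova_f(means, [sd] * 3, sizes):.1f}")
```

Both F values would be highly significant with these large group differences, but the larger F for the smaller standard deviation reflects the stronger evidence.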

6. Why do we not usually use 99.99% confidence intervals? [Options: The high confidence level generally produces very wide intervals; The technical conditions are much harder to satisfy with such a high confidence level; The calculations become quite time-consuming with such a high confidence level; The high confidence level generally produces very narrow intervals.]

This question addresses a very basic and fundamental issue about confidence intervals. I believe that if a student cannot answer this correctly, then they are misunderstanding something important about confidence intervals. In the past, I have asked this as a free-response question, and I have asked students to limit their response to a single sentence. I’m not very satisfied with the options that I presented here, so I’m not sure that this question works well as multiple-choice.
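The answer to this question comes down to how the critical value z* grows with the confidence level. The Python sketch below assumes a z-based interval (estimate ± z*·SE) for simplicity and prints z* for several levels. With the same standard error, a 99.99% interval comes out roughly twice as wide as a 95% interval.

```python
from statistics import NormalDist

def z_star(level):
    """Critical value for a two-sided confidence interval at the given level."""
    return NormalDist().inv_cdf(1 - (1 - level) / 2)

for level in (0.90, 0.95, 0.99, 0.9999):
    ratio = z_star(level) / z_star(0.95)
    print(f"{level:.2%} confidence: z* = {z_star(level):.3f} ({ratio:.2f} x the 95% width)")
```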

7. The United States has about 255 million adult residents. Which of the following comes closest to the sample size needed to estimate the proportion of American adults who traveled more than one mile from their home yesterday with a margin-of-error of plus-or-minus 2 percentage points? [Options: 255; 2550; 25,500; 255,000; 2,550,000]

I ask a variation of this question on almost every final exam that I give. I presented a very similar version in post #21 (here). Just to mix things up a bit, I changed this version to refer to adult Americans rather than all Americans. Mostly for fun, I used options that all begin with the same digits 255, so the question asks about order of magnitude. Many students mistakenly believe that the necessary sample size is larger than the correct response of 2550. Students could perform a calculation to determine this answer, but what I have in mind is that they should remember that many class examples of real surveys had sample sizes in the range of 1000-1500 people and produced margins-of-error close to 3 percentage points*. Reducing the margin-of-error from about 3 to 2 percentage points requires multiplying the sample size by about (3/2)² = 2.25, which points to a few thousand people rather than hundreds of thousands.

* I suspect that you have noticed that this is the first question for which the correct answer was not the first option given. Of course, students see the options in random order determined by the learning management system (LMS). I find it convenient to enter the options into the LMS with the correct answer first, so I thought I would do the same in this post. I altered that for question #7 just to keep you on your toes.
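For anyone who does want to check the calculation behind question 7, a minimal Python sketch follows. It assumes a 95% confidence level and the conservative guess p = 0.5 (neither is stated in the question), and it uses the standard large-population formula n = (z*/m)² × p(1 - p), where m is the desired margin-of-error. The population size of 255 million never enters the calculation.

```python
from math import ceil, sqrt
from statistics import NormalDist

Z_95 = NormalDist().inv_cdf(0.975)   # about 1.96

def sample_size(margin, p_guess=0.5, z=Z_95):
    """Smallest n whose margin of error for a proportion is at most `margin`."""
    return ceil((z / margin) ** 2 * p_guess * (1 - p_guess))

def margin_of_error(n, p_hat=0.5, z=Z_95):
    """Margin of error for a sample proportion based on n respondents."""
    return z * sqrt(p_hat * (1 - p_hat) / n)

print(sample_size(0.02))   # needed for plus-or-minus 2 percentage points
for n in (1000, 1500):
    print(n, round(margin_of_error(n), 3))
```

Under these assumptions the required sample size is about 2,400, so 2550 is the closest option, and samples of 1000 to 1500 people give margins-of-error of roughly 2.5 to 3.1 percentage points.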

8. Which of the following procedures would you use to investigate whether Cal Poly students tend to prefer milk chocolate or dark chocolate when offered a choice between the two? [Options: One-sample z-test for a proportion; Two-sample z-test for comparing proportions; One-sample t-test for a mean; Paired t-test for comparing means; Chi-square test for two-way tables]

I included several questions of this “which procedure would you use” form on my final exam. I especially like this one, the context for which I borrowed from Beth Chance. This scenario is the most basic one of all, and the very first inference setting that I present to students: testing a 50/50 hypothesis about a binary categorical variable. Some students mistakenly believe that this is a two-sample comparison. The “between the two” language at the end of the question probably contributes to this confusion. I used that wording on purpose to see whether some students would mistakenly conclude that this suggests a comparison between two groups rather than a comparison between two categories of a single variable.
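Because the question concerns a single binary variable (milk or dark preference) tested against a 50/50 split, the one-sample z-test for a proportion applies. The Python sketch below uses hypothetical counts (60 of 100 students choosing dark chocolate) purely for illustration; no real data from this scenario are implied, and the function name is illustrative.

```python
from math import sqrt
from statistics import NormalDist

def one_proportion_z_test(successes, n, p0=0.5):
    """Two-sided one-sample z-test of H0: p = p0."""
    p_hat = successes / n
    se = sqrt(p0 * (1 - p0) / n)   # standard error computed under H0
    z = (p_hat - p0) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical data: 60 of 100 sampled students prefer dark chocolate.
z, p = one_proportion_z_test(60, 100)
print(f"z = {z:.2f}, two-sided p-value = {p:.4f}")
```

The function takes one count and one sample size: there is no second group to compare, only two categories of a single variable.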

Last week I received a comment asking whether I worry that my students might read my blog posts to discover some exam questions that I like to ask, along with discussion about the answers. I have to admit that I do not worry about that at all. If my students are motivated enough to read this blog, I’ll be delighted.

I promise that next week’s blog post will address something other than exam questions. I always feel like writing about exam questions is somewhat lazy on my part, but I’ve invested so much time in writing and grading these questions that it’s very helpful to double-dip by using them in blog posts as well. The Spring term at Cal Poly begins today, so I’m hoping that will inspire some new ideas for blog posts.