#17 Random babies

Be forewarned that what you are about to read is highly objectionable. The topic is an introduction to basic ideas of randomness and probability, but that’s not the offensive part. No, the despicable aspect is the context of the example, which I ask you to accept in the spirit of silliness intended.

One of the classic problems in probability is the matching problem. When I first studied probability, this was presented in the context of a group of men at a party who throw their hats into the middle of a room and later retrieve their hats at random. As I prepared to present this problem at the start of my teaching career, I wanted to use a context that would better capture students’ attention. I described a hospital that returns newborn babies to their mothers at random. Of course I realized that this context is horrific, but I thought it might be memorable, and I was hoping that it’s so far beyond the pale as to be laughable. On the end-of-course student evaluations, one question asked what should be changed about the course, and another asked what should be retained. For the latter question, several of my students wrote: Keep the random babies! I have followed this advice for thirty years.

If you’d prefer to present this activity with a context that is value-neutral and perhaps even realistic, you could say that a group of people in a crowded elevator drop their cell phones, which then get jostled around so much that the people pick them up at random. It’s also been suggested to me that the context could be a veterinarian who gives cats back to their owners at random*!

* In case you missed post #16 (here), I like cats.

After I describe this scenario to students, for the case with four babies and mothers, I ask: Use your intuition to arrange the following events in order, from least likely to most likely:

- None of the four mothers gets the correct baby.

- At least one of the four mothers gets the correct baby.

- All four of the mothers get the correct baby.

At this point I don’t care how good the students’ intuitions are, but I do want them to think about these events before we begin to investigate how likely they are. How will we conduct this investigation? Simulate!

Before we proceed to use technology, we start with a by-hand simulation using index cards. I give four index cards to each student and ask them to write a baby’s first name on each card. Then I ask students to take a sheet of scratch paper and divide it into four sections, writing a mother’s last name in each section*. You know what comes next: Students shuffle the cards (babies) and randomly distribute them to the sections of the sheet (mothers). I ask students to keep track of the number of mothers who get the correct baby, which we call the number of matches. Then I point out that just doing this once does not tell us much of anything. We need to repeat simulating this random process for a large number of repetitions. I usually ask each student to repeat this three times.
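
For readers who want to mimic the index-card activity in code, here is a minimal sketch of one repetition: shuffle the babies, hand them out, and count the matches. Python and the function name `count_matches` are assumptions made for illustration; the classroom activity itself uses cards and scratch paper.

```python
import random

def count_matches(n_babies=4, rng=random):
    """Shuffle the babies and count how many land with the correct mother.

    Hypothetical helper: baby i belongs to mother i, for i = 0..n_babies-1.
    """
    babies = list(range(n_babies))
    rng.shuffle(babies)  # shuffle the index cards
    # Mother i receives babies[i]; a match occurs when the two labels agree.
    return sum(1 for mother, baby in enumerate(babies) if mother == baby)

if __name__ == "__main__":
    # Three repetitions, as each student does by hand.
    print([count_matches() for _ in range(3)])
```

Passing a seeded `random.Random` instance as `rng` makes a run reproducible, which can help when comparing results across a class.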

* I used to provide students with names, but I think it’s more fun to let them choose names for themselves. I emphasize that they must know which baby goes with which mother. I recommend that they use alliteration, for example with names such as Brian Bahmanyar and Hector Herrera and Jacob Jaffe and Sean Silva**, to help with this.

** These are the names of four graduates from the Statistics program at Cal Poly. Check out their (and others’) alumni updates to our department newsletter (here) to learn about careers that are available to those with a degree in statistics.

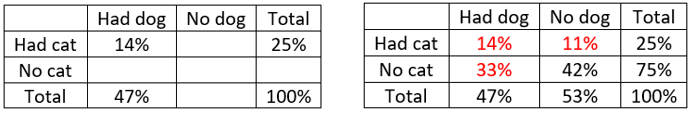

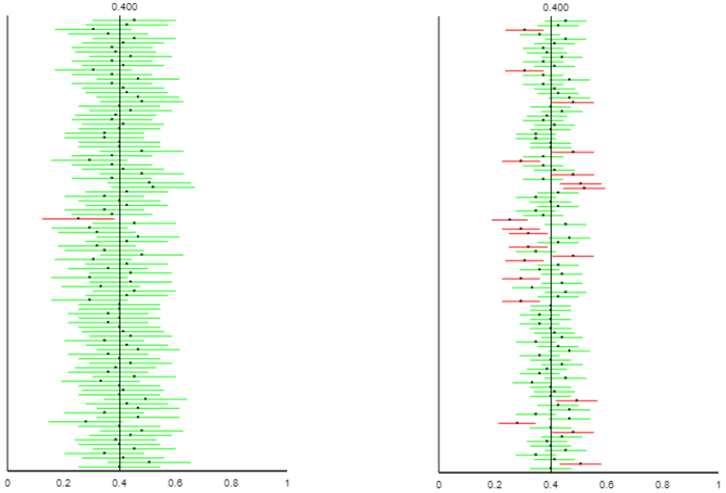

Once the students have completed their three repetitions, each goes to the board, where I have written the numbers 0, 1, 2, 3, 4 across the top*, and students put tally marks to indicate their number of matches for each of their repetitions. Then we count the tallies for each possible value, and finally convert these counts to proportions. Here are some sample results:

* I make the column for exactly 3 matches very skinny, because students should realize that it’s impossible to obtain this result (because if 3 mothers get the right baby, then the remaining baby must go to the correct mother also).

At this point I tell students that these proportions are approximate probabilities. I add that the term probability refers to the long-run proportion of times that the event would occur, if the random process were repeated for a very large number of repetitions. Based on the by-hand simulation with 96 repetitions shown above, our best guesses are that nobody would receive the correct baby in 40.6% of all repetitions and that all four mothers would get the correct baby in 3.1% of all repetitions.

How could we produce better approximations for these probabilities? Many students realize that more repetitions should produce better approximations. At this point we turn to an applet (here) to conduct many more repetitions quickly and efficiently. The screen shots below show how the applet generates the babies (!) and then distributes them at random to waddle to homes, with the colors of diapers and houses indicating which babies belong where. The sun comes out to shine gloriously at houses with correct matches, while clouds and rain fall drearily on houses that get the wrong baby.

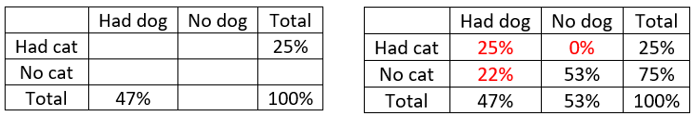

We repeat this for 1 repetition (trial) at a time until we finally tire of seeing the stork and the cute babies, and then we ask the applet to conduct 1000 repetitions. Here are some sample results:

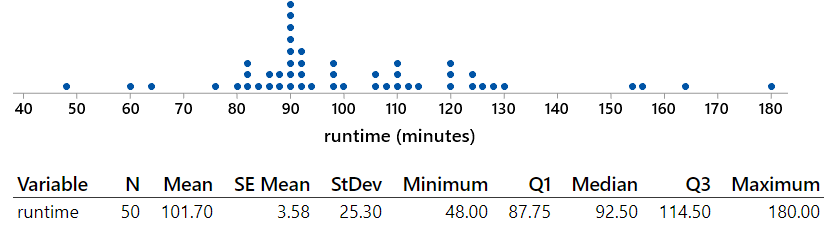

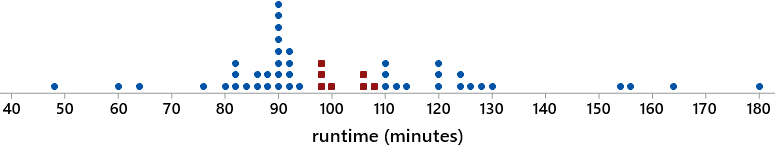

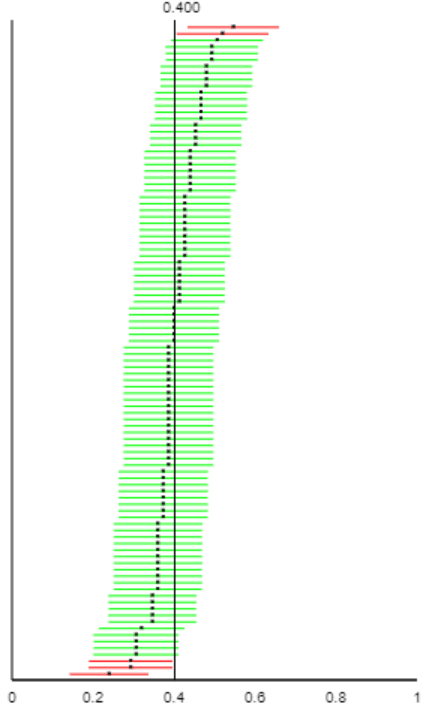

These are still approximate probabilities, but these are probably closer to the truth (meaning, closer to the theoretical long-run proportions) than our by-hand approximations, because they are based on many more repetitions (1000 instead of 96). By clicking on the bar in the graph corresponding to 0 matches, we obtain the following graph, which shows the proportion (relative frequency) of occurrences of 0 matches as a function of the number of repetitions (trials):

I point out that this proportion bounces around quite a bit when there are a small number of trials, but the proportion seems to be settling down as the number of repetitions increases. In fact, it’s not too much of a stretch to believe that the proportion might be approaching some limiting value in the long run. This limiting value is what the term probability means.
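
The settling-down behavior can be reproduced outside the applet. The sketch below is an illustration in Python, not the applet's actual code; it tracks the running proportion of repetitions with 0 matches after each trial. The function name and the `seed` parameter are assumptions.

```python
import random

def running_proportion_zero_matches(n_trials=1000, n_babies=4, seed=None):
    """Return the proportion of trials with 0 matches after each trial."""
    rng = random.Random(seed)
    zero_count = 0
    proportions = []
    for trial in range(1, n_trials + 1):
        babies = list(range(n_babies))
        rng.shuffle(babies)
        # A trial has 0 matches when no mother receives her own baby.
        if all(mother != baby for mother, baby in enumerate(babies)):
            zero_count += 1
        proportions.append(zero_count / trial)
    return proportions

if __name__ == "__main__":
    props = running_proportion_zero_matches(n_trials=1000, seed=17)
    # Early values tend to bounce around; later values tend to change less.
    for n in (10, 100, 1000):
        print(n, round(props[n - 1], 3))
```

Plotting `proportions` against the trial number should give a picture similar in spirit to the applet's relative-frequency graph.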

Determine the approximate probability that at least one mother gets the correct baby. Indicate two different ways to determine this. Also interpret this (approximate) probability. One way is to add up the number of repetitions with at least one match: (344 + 241 + 46) / 1000 = 0.631. Another way is to subtract the estimate for 0 matches from one: 1 – 0.369 = 0.631. Based on our simulation analysis, we estimate that at least one mother would get the correct baby in 63.1% of all repetitions, if this random process of distributing four babies to mothers at random were repeated a very large number of times.
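
Both routes to this estimate can be checked with a few lines of Python. The tallies below are the applet counts quoted above; the count of 369 for 0 matches is inferred from the 0.369 estimate, and 3 matches is entered as 0 because that result is impossible. The variable names are illustrative assumptions.

```python
# Tallies from the 1000 applet repetitions discussed in the text.
# 369 is inferred from the 0.369 estimate; 3 matches cannot occur.
tallies = {0: 369, 1: 344, 2: 241, 3: 0, 4: 46}
total = sum(tallies.values())

# Way 1: add up the repetitions with at least one match.
direct = sum(count for matches, count in tallies.items() if matches >= 1) / total

# Way 2: subtract the estimate for 0 matches from one.
complement = 1 - tallies[0] / total

print(round(direct, 3), round(complement, 3))
```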

Can we calculate the exact, theoretical probabilities here? In other words, can we figure out the long-run limiting values for these proportions? Yes, we can, and it’s not terribly hard. But I don’t do this in “Stat 101” courses because I consider this to be a mathematical topic that can distract students’ attention from statistical thinking. The essential point for statistical thinking is to think of probability as the long-run proportion of times that an event would happen if the random process were repeated a very large number of times, and I think the simulation analysis achieves this goal.

I do present the calculation of exact probabilities in introductory courses for mathematically inclined students and also in a statistical literacy course that includes a unit on randomness and probability. The first step is to list all possible outcomes of the random process, called a sample space. In other words, we need to list all ways to distribute four babies to their mothers at random. This can be quite challenging and time-consuming for students who are not strong mathematically, so I present the sample space to them:

How is this list to be understood? I demonstrate this for students by analyzing entries in the first column. The outcome 1234 in the upper left means that all four mothers get the correct baby. The outcome 2134 below that means that mothers 3 and 4 got the correct baby, but mothers 1 and 2 had their babies swapped. The outcome 3124 (below the previous one) means that mother 4 got the correct baby, but mother 1 got baby 3 and mother 2 got baby 1 and mother 3 got baby 2. The outcome 4123 in the bottom left means that all four mothers got the wrong baby: mother 1 got baby 4, and mother 2 got baby 1, and mother 3 got baby 2, and mother 4 got baby 3.
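
The sample space can also be generated rather than written out by hand. The following Python sketch lists all 24 outcomes with the number of matches for each. The layout of four rows of six is an assumption, chosen so that the first column agrees with the outcomes discussed above (1234, 2134, 3124, 4123); the helper name `matches` is hypothetical.

```python
from itertools import permutations

def matches(outcome):
    """Count positions where mother i (1-based) holds baby i."""
    return sum(1 for mother, baby in enumerate(outcome, start=1)
               if int(baby) == mother)

# Position i of each string is the baby given to mother i.
sample_space = ["".join(str(b) for b in p) for p in permutations(range(1, 5))]

# permutations() yields outcomes in lexicographic order, so slicing into
# rows of six puts one starting baby in each row.
rows = [sample_space[i:i + 6] for i in range(0, len(sample_space), 6)]
for row in rows:
    print("  ".join(f"{o} ({matches(o)})" for o in row))
```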

How does this list lead us to probabilities? We take the phrase “at random” to mean that all 24 of these possible outcomes are equally likely. Therefore, we can calculate the probability of an event by counting how many outcomes comprise the event and dividing by 24, the total number of outcomes.

Determine the number of matches for each outcome. Then count how many outcomes produce 0 matches, 1 match, and so on. Finally, divide by the total number of outcomes to determine the exact probabilities. Express these probabilities as fractions and also as decimals, with three decimal places of accuracy. I ask students to work together on this and compare their answers with nearby students. The correct answers are:

- 0 matches: 9/24 = .375

- 1 match: 8/24 ≈ .333

- 2 matches: 6/24 = .250

- 3 matches: 0/24 = .000

- 4 matches: 1/24 ≈ .042
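
The counting exercise can be double-checked by brute force. This is a minimal Python sketch, assuming (as in the text) that all 24 outcomes are equally likely; the function name `exact_match_distribution` is an assumption made for illustration.

```python
from collections import Counter
from fractions import Fraction
from itertools import permutations

def exact_match_distribution(n=4):
    """Return {number of matches: exact probability} for n babies."""
    outcomes = list(permutations(range(1, n + 1)))  # the sample space
    counts = Counter(
        sum(1 for mother, baby in enumerate(outcome, start=1) if mother == baby)
        for outcome in outcomes
    )
    # Equally likely outcomes: probability = (outcomes in event) / (all outcomes).
    return {k: Fraction(counts.get(k, 0), len(outcomes)) for k in range(n + 1)}

if __name__ == "__main__":
    dist = exact_match_distribution(4)
    for k, p in dist.items():
        print(k, p, f"{float(p):.3f}")
    print("at least one match:", 1 - dist[0])
```

Note that `Fraction` reduces to lowest terms, so 9/24 prints as 3/8 and 15/24 as 5/8.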

Compare these (exact) probabilities to the approximate ones from the by-hand and applet simulations. Students notice that the simulation analyses, particularly the applet one based on a larger number of repetitions, produced reasonable approximations.

Determine and interpret the probability that at least one mother gets the correct baby. This probability is (8+6+1)/24 = 15/24 = .625. We could also calculate this as 1 – 9/24 = 15/24 = .625. If this random process were repeated a very large number of times, then at least one mother would get the correct baby in about 62.5% of the repetitions.

Determine and interpret the probability that at least half of the four mothers get the correct baby. This probability is (6+1)/24 = 7/24 ≈ .292. This means that if this random process were repeated a very large number of times, then at least half of the mothers would get the correct baby in about 29.2% of the repetitions.

Finally, we return to the question of ordering the three events listed above, from least likely to most likely. The correct ordering is:

- All four of the mothers get the correct baby (probability .042).

- None of the four mothers gets the correct baby (probability .375).

- At least one of the four mothers gets the correct baby (probability .625).

Here are some follow-up questions that I have asked on a quiz or exam:

For parts (a) – (c), suppose that three people (Alisha, Beth, Camille) drop their cell phones in a crowded elevator. The phones get jostled so much that each person picks up a phone at random. The six possible outcomes can be listed (using initials) as: ABC, ACB, BAC, BCA, CAB, CBA.

- a) The probability that all three of them pick up the correct phone can be shown to be 1/6 ≈ .167. Does this mean that if they repeat this random process (of dropping their three phones and picking them up at random) for a total of 6 repetitions, you can be sure that all three will get the correct phone exactly once? Answer yes or no; also explain your answer.

- b) Determine the probability that at least one of them picks up the correct phone. Express this probability as a fraction and a decimal. Show your work.

- c) Interpret what this probability means by finishing this sentence: If the random process (of three people picking up cell phones at random) were repeated a very large number of times, then …

For parts (d) – (f), suppose instead that six people in a crowded elevator drop their cell phones and pick them up at random.

- d) Would the probability that all of the people pick up the correct phone be smaller, the same, or larger than with three people?

- e) Which word or phrase – impossible, very unlikely, or somewhat unlikely – best describes the event that exactly five of the six people pick up the correct phone?

- f) Which word or phrase – impossible, very unlikely, or somewhat unlikely – best describes the event that all six people pick up the correct phone?

Answers: a) No. The 1/6 probability refers to the proportion of times that all three would get the correct phone in the long run, not in a small number (such as six) of repetitions. b) There are four outcomes in which at least one person gets the correct phone (ABC, ACB, BAC, CBA), so this probability is 4/6 = 2/3 ≈ .667. c) … at least one of the three people would pick up the correct phone in about 2/3 (or about 66.7%) of the repetitions. d) Smaller e) Impossible f) Very unlikely
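
The numerical answers to parts (b), (d), (e) and (f) can be verified by enumeration. The Python sketch below assumes equally likely outcomes; the function name is hypothetical, and the initials D, E, F for the additional people in the six-person elevator are invented for illustration.

```python
from fractions import Fraction
from itertools import permutations

def match_count_probabilities(people):
    """Return a list whose k-th entry is P(exactly k people get their own phone)."""
    outcomes = list(permutations(people))
    counts = [0] * (len(people) + 1)
    for outcome in outcomes:
        k = sum(1 for owner, phone in zip(people, outcome) if owner == phone)
        counts[k] += 1
    return [Fraction(c, len(outcomes)) for c in counts]

three = match_count_probabilities("ABC")
six = match_count_probabilities("ABCDEF")  # D, E, F are hypothetical initials

print("b) at least one of three correct:", 1 - three[0])
print("d) all correct, three people vs six:", three[3], "vs", six[6])
print("e) exactly five of six correct:", six[5])
print("f) all six correct:", six[6])
```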

I like to think that this memorable context forms the basis for an effective activity that helps students to develop a basic understanding of probability as the long-run proportion of times that an event occurs.

P.S. As I’ve said before, Beth Chance deserves the lion’s share (and then some) of the credit for the applet collection that I refer to often. Carlos Lima, a former student of Beth’s for an introductory statistics course, designed and implemented the animation features in the “random babies” applet.